February 14th, 2025

Venice.ai Change Log - February 14, 2025

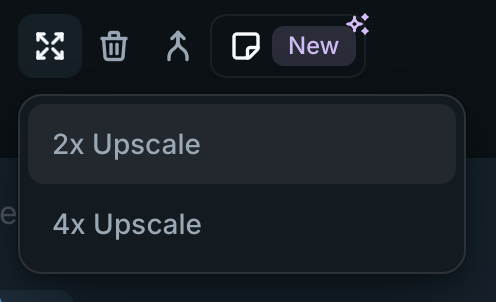

App

Added an upscale menu that provides 2x and 4x upscale options for Pro users.

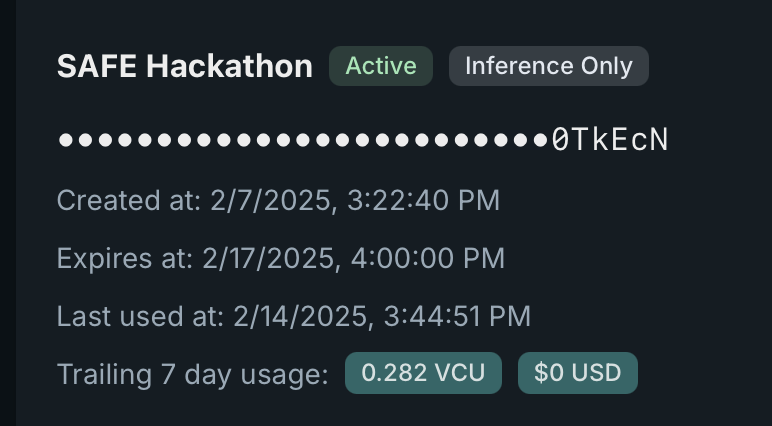

API Dashboard

The API key dashboard now shows the date each API key was last used and its trailing 7-day usage in USD and VCU.

API

Added support for the `scale` parameter when upscaling. Available options are `2` and `4`. Postman has been updated.

Added API traits endpoint at `/api/v1/models/traits`. Postman has been updated.

Added new `traits` on models to map OpenAI’s models to Venice’s current model fleet. The current mapping is as follows. Postman has been updated.

{
  "function_calling_default": ["llama-3.3-70b"],
  "default": ["llama-3.3-70b"],
  "o1-mini": ["llama-3.3-70b"],
  "o1-mini-2024-09-12": ["llama-3.3-70b"],
  "o3-mini": ["llama-3.3-70b"],
  "chatgpt-4o-latest": ["llama-3.3-70b"],
  "gpt-4o-mini": ["llama-3.3-70b"],
  "gpt-4o-mini-2024-07-18": ["llama-3.3-70b"],
  "claude-3-5-haiku-20241022": ["llama-3.3-70b"],
  "claude-3-haiku-20240307": ["llama-3.3-70b"],
  "gpt-3.5-turbo-1106": ["llama-3.3-70b"],
  "gpt-3.5-turbo-instruct": ["llama-3.3-70b"],
  "gpt-3.5-turbo-instruct-0914": ["llama-3.3-70b"],
  "gpt-3.5-turbo-0125": ["llama-3.3-70b"],
  "gpt-3.5-turbo": ["llama-3.3-70b"],
  "gpt-3.5-turbo-16k": ["llama-3.3-70b"],
  "gpt-4o": ["llama-3.3-70b"],
  "gpt-4o-2024-08-06": ["llama-3.3-70b"],
  "gpt-4o-2024-05-13": ["llama-3.3-70b"],
  "gpt-4o-2024-11-20": ["llama-3.3-70b"],
  "claude-3-5-sonnet-20241022": ["llama-3.3-70b"],
  "claude-3-5-sonnet-20240620": ["llama-3.3-70b"],
  "fastest": ["llama-3.2-3b"],
  "most_uncensored": ["dolphin-2.9.2-qwen2-72b"],
  "most_intelligent": ["llama-3.1-405b"],
  "o1": ["llama-3.1-405b"],
  "o1-preview": ["llama-3.1-405b"],
  "o1-preview-2024-09-12": ["llama-3.1-405b"],
  "claude-3-opus-20240229": ["llama-3.1-405b"],
  "default_code": ["qwen32b"]
}

Model Updates

Reduced the Qwen VL context to ~32k tokens to resolve memory issues with server infrastructure.
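
As an illustration of how the trait mapping from the API section might be consumed on the client side, here is a minimal Python sketch. It assumes the mapping has already been loaded into a dictionary (only an excerpt of the published mapping is reproduced). The `resolve_model` helper and its fallback to the `default` trait are hypothetical and are not part of the Venice API; the server may resolve unknown names differently.

```python
from typing import Mapping, Sequence

# Excerpt of the trait mapping published in this changelog entry.
TRAITS: dict[str, list[str]] = {
    "default": ["llama-3.3-70b"],
    "gpt-4o": ["llama-3.3-70b"],
    "fastest": ["llama-3.2-3b"],
    "most_uncensored": ["dolphin-2.9.2-qwen2-72b"],
    "most_intelligent": ["llama-3.1-405b"],
    "o1": ["llama-3.1-405b"],
    "default_code": ["qwen32b"],
}


def resolve_model(
    name: str,
    traits: Mapping[str, Sequence[str]] = TRAITS,
    fallback_trait: str = "default",
) -> str:
    """Return the first Venice model listed for a trait or OpenAI-style name.

    Hypothetical helper: falling back to `fallback_trait` for unknown
    names is an assumption made for this sketch.
    """
    candidates = traits.get(name) or traits.get(fallback_trait)
    if not candidates:
        raise KeyError(f"no model mapped for {name!r} or {fallback_trait!r}")
    return candidates[0]


if __name__ == "__main__":
    for requested in ("gpt-4o", "o1", "fastest", "some-unknown-model"):
        print(requested, "->", resolve_model(requested))
```

Taking the first entry of each list keeps the helper simple; each trait in the mapping above lists a single model, so no tie-breaking is needed.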