**Search-R1**: the first **reproduction of DeepSeek-R1-Zero** with reinforcement learning

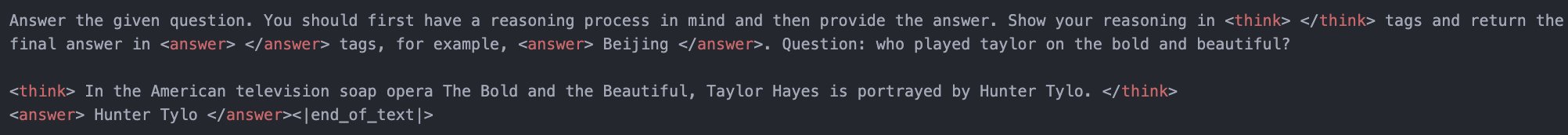

What do you think of something like this?

Search-R1 uses reinforcement learning to train LLM agents that reason and call search engines.

This is a step toward an **open-source version of OpenAI's “Deep Research”** trained via RL.

**3B base LLMs**, including not just **Qwen2.5** but also **Llama 3.2**, learn to **reason** and **call search engines** entirely on their own.

We follow **DeepSeek-R1-Zero**: start from a base LLM, a set of prompts, and rewards computed from ground-truth answers, then apply **reinforcement learning (RL)**. Our experiments are conducted on **Natural Questions (NQ)**. This is a factual QA dataset on which LLMs struggle to answer directly, which makes search engine calls crucial. The only supervision is a **rule-based outcome reward** (string exact match against the ground-truth answer) that determines correctness.
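Below is a minimal sketch of such a rule-based reward. It rests on two assumptions, because the post only specifies "string exact match":

- the model wraps its final answer in `<answer>` tags;
- SQuAD-style normalization (lowercasing, dropping punctuation and English articles) is applied before comparing.

`exact_match_reward`, `extract_answer` and `normalize_answer` are hypothetical names for illustration.

```python
import re
import string
from typing import List, Optional

PUNCTUATION = set(string.punctuation)


def normalize_answer(text: str) -> str:
    """Lowercase, drop punctuation and English articles, collapse whitespace.

    This SQuAD-style normalization is an assumption; the post only says
    "string exact match".
    """
    text = "".join(ch for ch in text.lower() if ch not in PUNCTUATION)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def extract_answer(response: str) -> Optional[str]:
    """Return the text of the last <answer>...</answer> span (hypothetical tag format)."""
    matches = re.findall(r"<answer>(.*?)</answer>", response, flags=re.DOTALL)
    return matches[-1].strip() if matches else None


def exact_match_reward(response: str, gold_answers: List[str]) -> float:
    """Binary outcome reward: 1.0 on a normalized exact match with any gold answer."""
    prediction = extract_answer(response)
    if prediction is None:
        return 0.0  # an unparsable response earns nothing
    pred = normalize_answer(prediction)
    return 1.0 if any(pred == normalize_answer(g) for g in gold_answers) else 0.0
```

`gold_answers` is a list because a question may have several acceptable answer strings. Because the reward is binary and rule-based, no learned reward model is involved.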

We first experiment with **RL tuning *without* search engine access**, letting the **LLM (Llama 3.2-3B-base)** answer questions on its own. Initially, the model produces **meaningless filler output**, but through RL it **gradually learns** to generate meaningful answers.

Next, we **tell the LLM (Llama 3.2-3B-base)** in its prompt that it can **call a search engine** to retrieve relevant information. **Surprisingly**, even **without** any supervised fine-tuning (SFT), the **base LLM learns to call the search engine, interpret the search results, and answer questions, all through RL!**
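To make the setup concrete, here is one hypothetical way to phrase that instruction and to classify what the model generates. The following are all assumptions for illustration, not the exact prompt used in these experiments:

- the template wording;
- the `<think>`, `<search>`, `<information>` and `<answer>` tags;
- the helper names `build_prompt` and `parse_action`.

```python
import re
from typing import Optional, Tuple

# Hypothetical instruction text: the post only says the model is told it can
# call a search engine; the exact wording and tag names here are assumptions.
PROMPT_TEMPLATE = (
    "Answer the given question. Think step by step inside <think> and </think>. "
    "If you need more knowledge, call a search engine with <search> query </search>; "
    "results will be returned between <information> and </information>. "
    "When you are ready, give the final answer inside <answer> and </answer>.\n"
    "Question: {question}\n"
)


def build_prompt(question: str) -> str:
    """Fill the instruction template with a question."""
    return PROMPT_TEMPLATE.format(question=question.strip())


def parse_action(segment: str) -> Tuple[str, Optional[str]]:
    """Classify a generated segment as a search call, a final answer, or neither.

    Returns ("search", query), ("answer", text), or ("invalid", None).
    The leftmost complete <search> or <answer> span wins.
    """
    match = re.search(r"<(search|answer)>(.*?)</\1>", segment, flags=re.DOTALL)
    if match is None:
        return "invalid", None
    return match.group(1), match.group(2).strip()
```

Note that the base model receives no demonstrations of this format. Under RL, only the reward signal would push it toward emitting well-formed `<search>` and `<answer>` spans.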

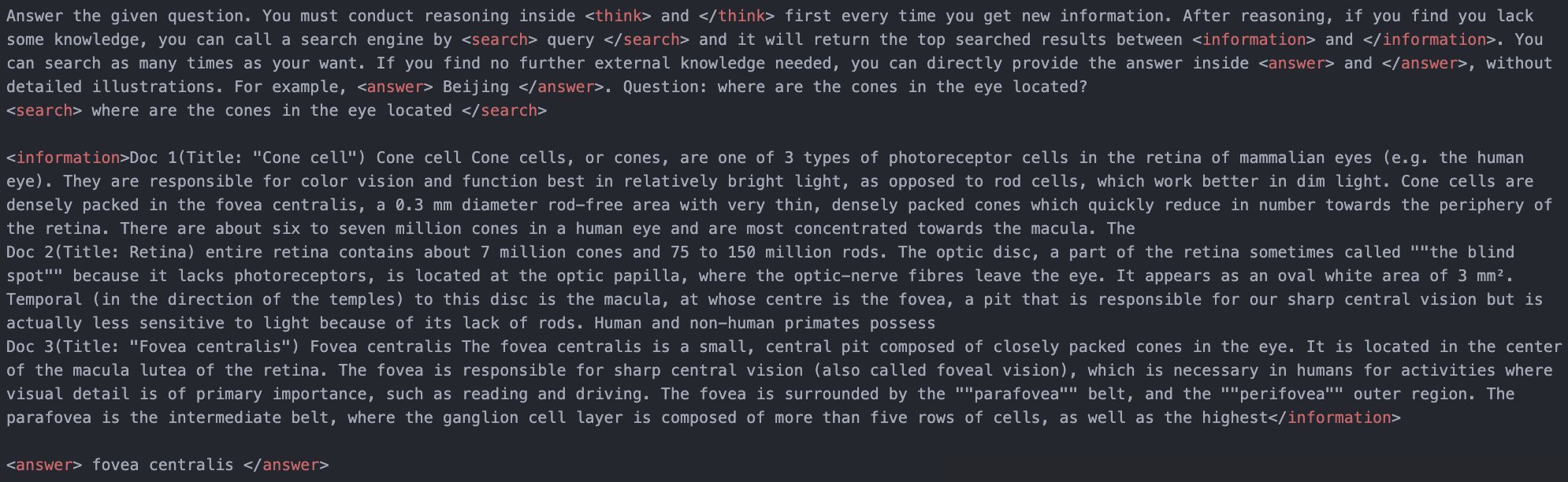

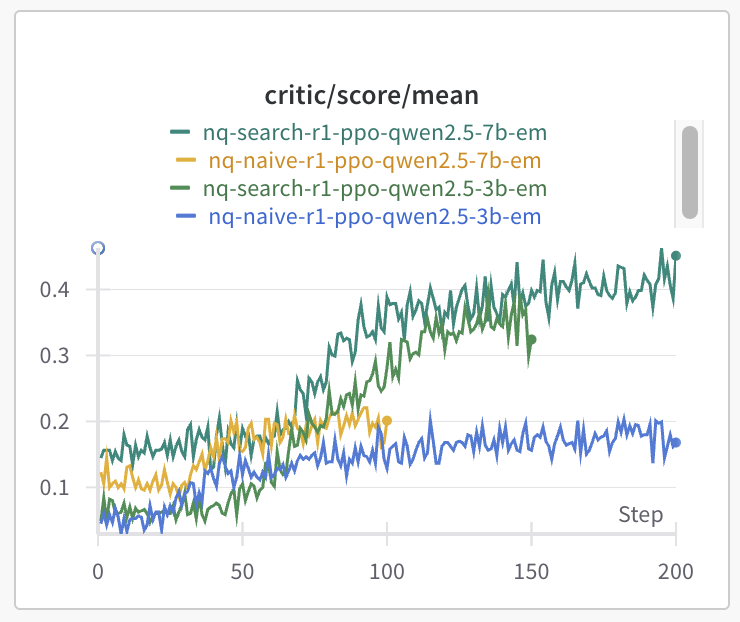

We compare the **LLM without search engine access** against the **LLM trained with search-augmented RL**. The **search-enabled model performs better!**

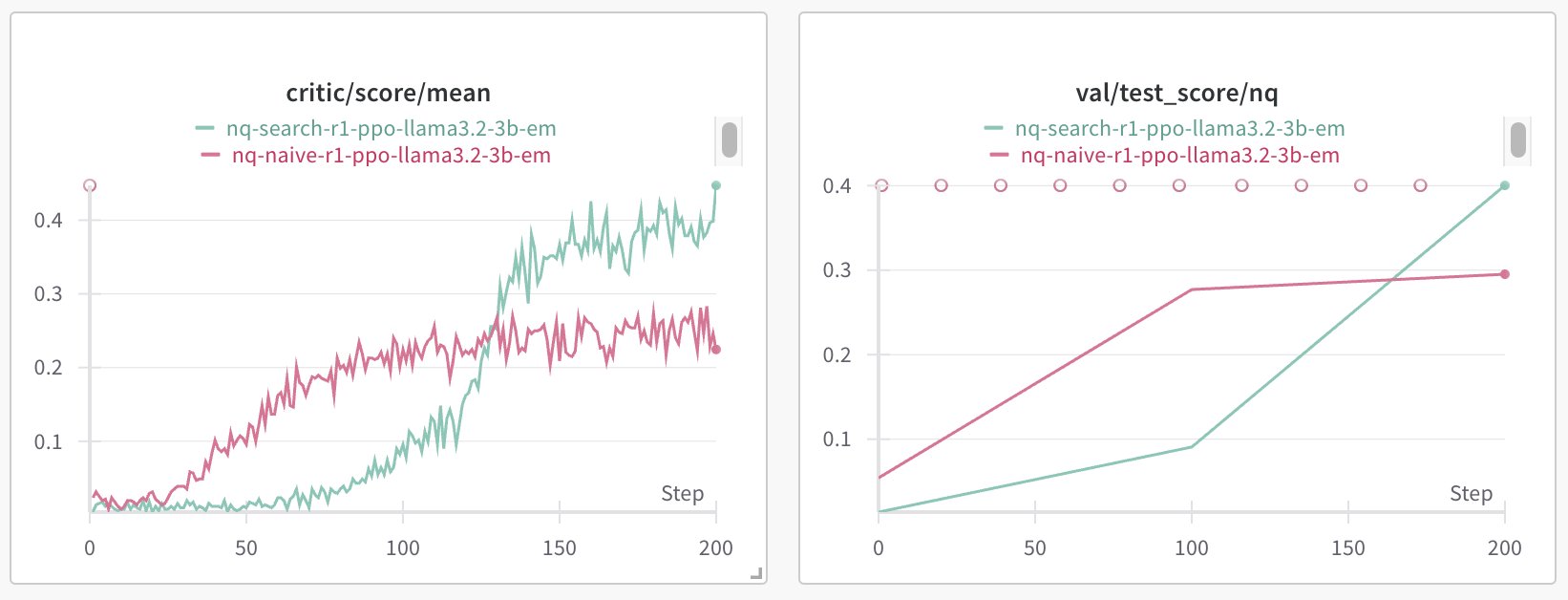

When training **Llama 3.2-3B-base** with **search engine calls**, the response length follows an interesting trend:

**First, it decreases**, as the model learns to **avoid excessive filler words**. **Then, it increases**, as the model learns to **call the search engine and reason** effectively. Since **NQ is a relatively simple task**, the response length **stabilizes at ~500 tokens**.

We experiment with **Qwen2.5-3B-base** and **Qwen2.5-7B-base** in both the with-search and without-search RL settings. **It works for both!** Interestingly, in the **search-augmented setting**, the **3B model achieves performance comparable to the 7B model**. **Hypothesis**: when an **LLM is connected to external information**, its **reasoning ability may not necessarily require a large model size**.

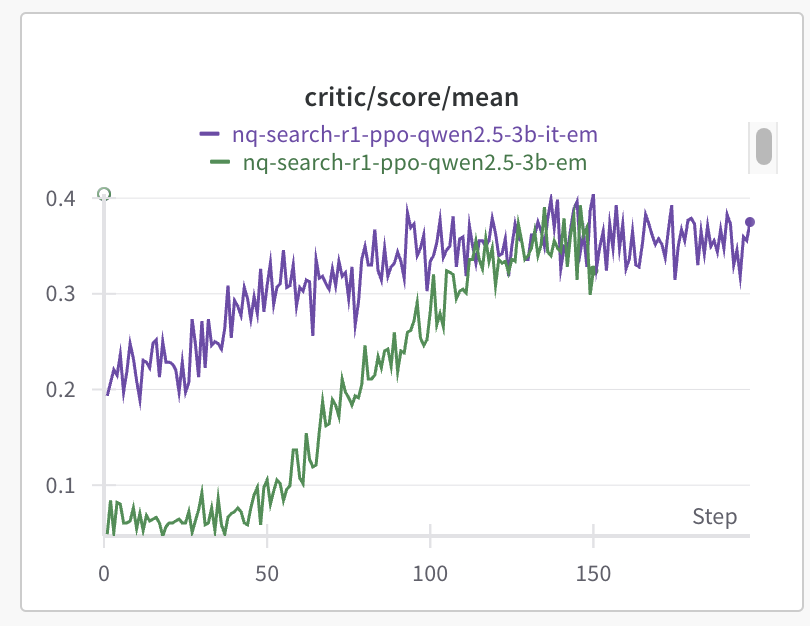

**Both base and instruction-tuned models work!** The **instruction-tuned model** converges **faster** and starts from **a better initial performance**. However, the **final performance** of both models is **very similar**. This suggests that while **instruction tuning accelerates learning, reinforcement learning can bridge the gap over time**.

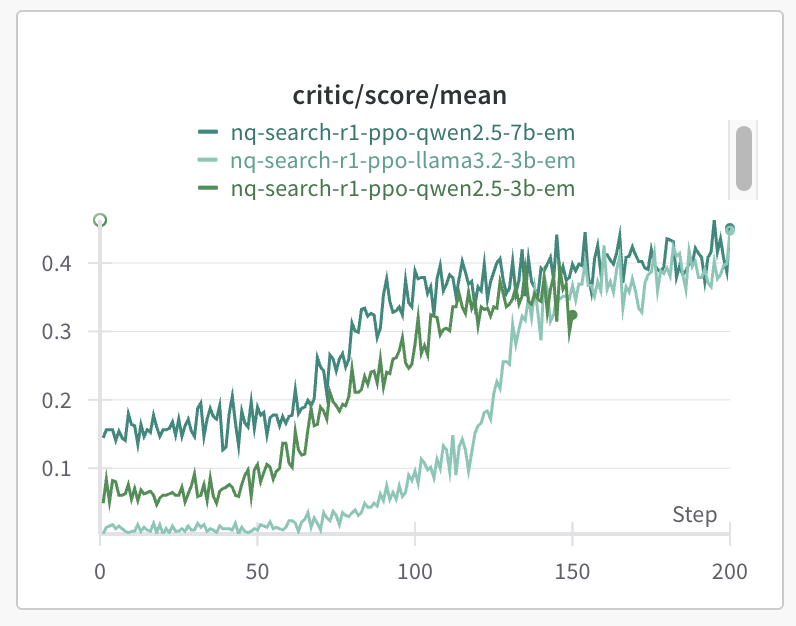

We experiment with **Qwen2.5-3B-base, Llama 3.2-3B-base, and Qwen2.5-7B-base**, and **they all work!** This is **notably different from math reasoning**, where only **Qwen2.5-series** models succeed.

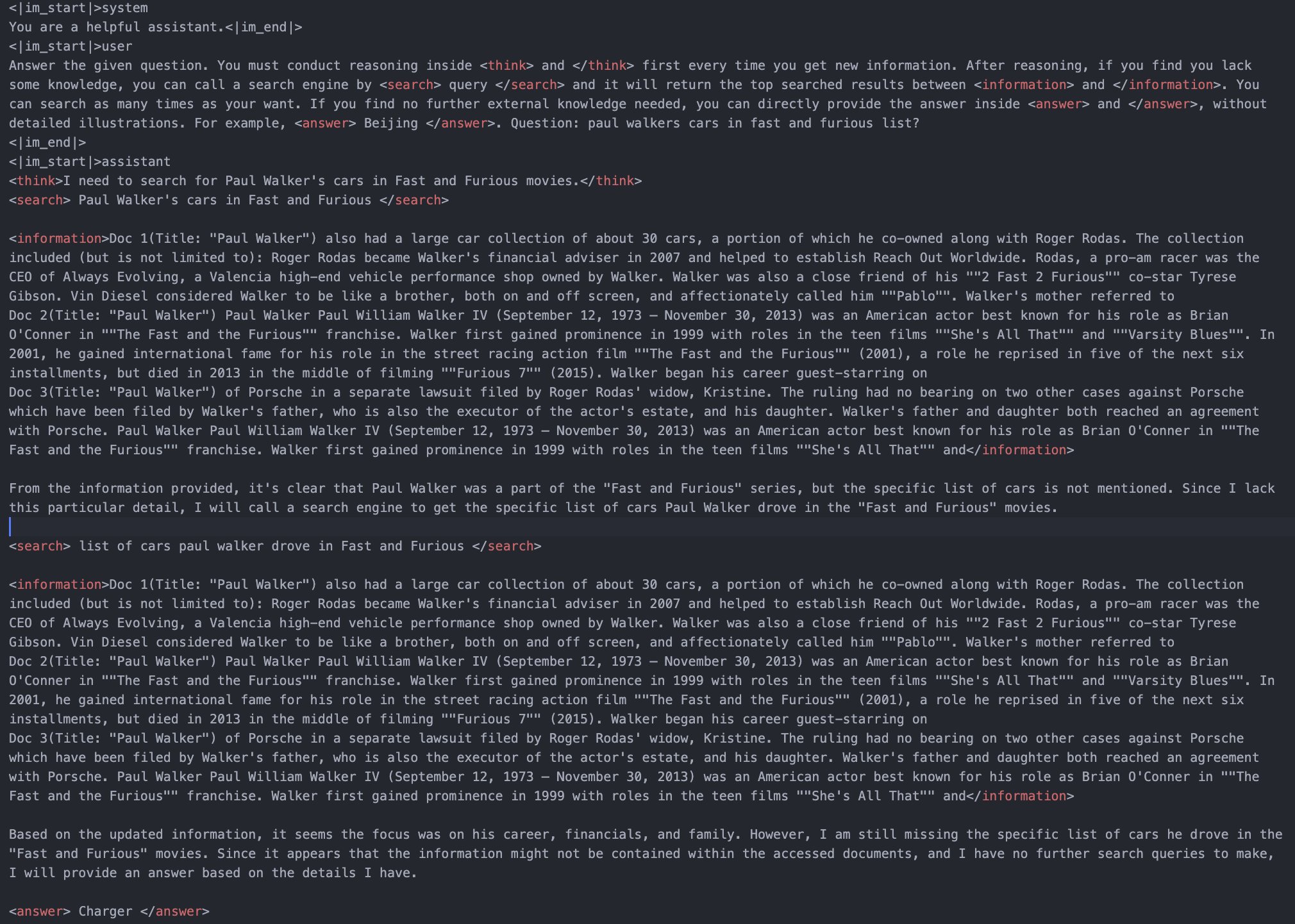

The **LLM learns to make multi-turn search engine calls**, refining its queries step by step to gather more relevant information. This shows its ability to **iteratively improve retrieval and reasoning**, a key capability for real-world research agents!
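Multi-turn search can be pictured as a loop that alternates generation and retrieval. This sketch makes several assumptions, and `rollout` is a hypothetical name:

- a `generate` callable returns the model's next segment;
- a `search` callable returns passages for a query;
- the tag format and the `max_turns` budget are likewise hypothetical.

A real training rollout would likely batch this over many prompts.

```python
import re
from typing import Callable, List, Optional, Tuple

# Hypothetical interfaces: `generate(context)` returns the model's next segment
# (assumed to stop right after </search> or </answer>), and `search(query)`
# returns a list of retrieved passages.
Generate = Callable[[str], str]
Search = Callable[[str], List[str]]

SEARCH_RE = re.compile(r"<search>(.*?)</search>", re.DOTALL)
ANSWER_RE = re.compile(r"<answer>(.*?)</answer>", re.DOTALL)


def rollout(
    prompt: str, generate: Generate, search: Search, max_turns: int = 4
) -> Tuple[str, Optional[str]]:
    """Alternate generation and retrieval until the model answers or turns run out.

    Returns the full trajectory text and the final answer (None if none was given).
    """
    context = prompt
    for _ in range(max_turns):
        segment = generate(context)
        context += segment
        answer = ANSWER_RE.search(segment)
        if answer is not None:
            return context, answer.group(1).strip()
        query = SEARCH_RE.search(segment)
        if query is None:
            break  # neither a search call nor an answer: end the episode
        passages = search(query.group(1).strip())
        # Append retrieved passages so the next turn can condition on them.
        context += "\n<information>" + "\n".join(passages) + "</information>\n"
    return context, None
```

Each refined query shows up in the trajectory as a new `<search>` block followed by its `<information>` results. Capping the number of turns keeps rollouts bounded.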

Our framework supports **flexible search engine choices**, including:

- **Local retrievers** (sparse/dense)
- **Online search engines** (Google, Bing, etc.)
- **Custom search engines**: launch your own on any corpus and integrate it with RL effortlessly!
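One way to keep the search backend swappable is to code against a small interface. The following sketch uses Okapi BM25 over an in-memory corpus as a stand-in for a "local sparse retriever". The `Retriever` protocol, `BM25Retriever` and `search_tool` are hypothetical names for illustration, not the framework's actual API. A dense retriever or an online engine could implement the same `retrieve` method.

```python
import math
import re
from collections import Counter
from typing import List, Protocol


class Retriever(Protocol):
    """Hypothetical interface: any backend that maps a query to top-k passages."""

    def retrieve(self, query: str, k: int) -> List[str]: ...


def tokenize(text: str) -> List[str]:
    """Lowercase word tokens; deliberately simple for the sketch."""
    return re.findall(r"\w+", text.lower())


class BM25Retriever:
    """A minimal in-memory sparse retriever using Okapi BM25 scoring."""

    def __init__(self, corpus: List[str], k1: float = 1.5, b: float = 0.75) -> None:
        self.corpus = corpus
        self.docs = [Counter(tokenize(doc)) for doc in corpus]
        self.doc_lens = [sum(d.values()) for d in self.docs]
        self.avg_len = sum(self.doc_lens) / max(len(self.docs), 1)
        self.k1, self.b = k1, b
        # Document frequency: number of documents containing each term.
        df = Counter(term for d in self.docs for term in d)
        n = len(self.docs)
        # BM25 IDF with +1 inside the log so scores stay non-negative.
        self.idf = {t: math.log(1 + (n - f + 0.5) / (f + 0.5)) for t, f in df.items()}

    def score(self, query_terms: List[str], i: int) -> float:
        doc, length = self.docs[i], self.doc_lens[i]
        total = 0.0
        for term in query_terms:
            tf = doc.get(term, 0)
            if tf == 0:
                continue
            norm = tf + self.k1 * (1 - self.b + self.b * length / self.avg_len)
            total += self.idf[term] * tf * (self.k1 + 1) / norm
        return total

    def retrieve(self, query: str, k: int = 3) -> List[str]:
        terms = tokenize(query)
        # sorted() is stable, so ties keep corpus order.
        ranked = sorted(range(len(self.docs)), key=lambda i: self.score(terms, i), reverse=True)
        return [self.corpus[i] for i in ranked[:k]]


def search_tool(retriever: Retriever, query: str, k: int = 3) -> str:
    """Format top-k results as one string the policy can read; any Retriever works."""
    return "\n".join(f"Doc {i + 1}: {p}" for i, p in enumerate(retriever.retrieve(query, k)))
```

Because the RL loop only calls `retrieve`, swapping sparse retrieval for dense retrieval or a web API would change only the backend class.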

The pipeline is based on verl (https://github.com/volcengine/verl), a highly efficient RL framework.

The project is fully open source.

Rejected

Feature Requests

Web Search

About 1 year ago

JaeSwift
